AI and Environmental Concerns

AI is no longer abstract—it has a footprint. This page is meant to ground the conversation in facts and tradeoffs, not fear.

TL;DR (Topline Summary)

- AI is becoming a major electricity consumer: data centers and AI workloads are expected to use a growing share of global electricity over this decade.

- Fixes are underway: cleaner energy, more efficient chips, smarter data center design, and tools that cut waste.

- Transparency and sensible regulation are essential so the benefits of AI don't come with hidden environmental costs.

Why the Concern Matters

- Large AI models require significant computing power, which translates into higher electricity demand.

- That demand often lands in specific regions, putting pressure on local grids, water supplies, and communities.

- Without consistent reporting, it's hard for the public to see how much energy is being used, or how fast it's growing.

What's Driving the Surge?

- Rapid AI growth: More powerful models and wider use (search, office tools, creative apps, infrastructure) mean far more computation than even a few years ago.

- Slowing efficiency gains: Data centers have become much more efficient over the past decade, but the easy wins are largely taken; further gains are harder and slower.

- Grid constraints: In some areas, power availability and transmission capacity are now a key bottleneck to building new data centers.

How the Industry Is Responding

- Cleaner power sources: Many large tech companies are signing long-term contracts for wind, solar, and other low-carbon energy, and exploring options like advanced nuclear.

- Smarter energy timing: Some workloads are shifted to times and places where renewable energy is abundant or demand on the grid is lower. (Think of utilities offering discounts for running heavy appliances at night—that logic is being applied at data-center scale.)

- More efficient hardware: New AI chips and server designs do the same work with fewer watts, and this is an area where progress is still being made.

- AI-for-efficiency: AI itself is used to optimize cooling systems, balance workloads, and cut waste in data centers.

- Transparency push: Researchers, companies, and policymakers are working on clearer standards for reporting energy use, emissions, and water consumption from AI.
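For readers who like to see the mechanics, here is a minimal sketch of the "smarter energy timing" idea from the list above: given an hourly forecast of how carbon-intensive the grid will be, pick the start time that lets a flexible job run in the cleanest stretch. The function name `best_start_hour` and the forecast numbers are made up for illustration. Real schedulers also weigh deadlines, prices, and where the job can run, so treat this as a toy under those assumptions, not a description of any company's system.

```python
def best_start_hour(carbon_intensity, job_hours):
    """Return the start index of the cleanest contiguous window.

    carbon_intensity: hourly grid forecast (e.g. gCO2 per kWh), one value per hour.
    job_hours: how many consecutive hours the deferrable job needs.
    """
    if job_hours <= 0 or job_hours > len(carbon_intensity):
        raise ValueError("job_hours must be between 1 and the forecast length")

    # Total intensity of the first candidate window.
    window_total = sum(carbon_intensity[:job_hours])
    best_start, best_total = 0, window_total

    # Slide the window one hour at a time, updating the running total.
    for start in range(1, len(carbon_intensity) - job_hours + 1):
        window_total += carbon_intensity[start + job_hours - 1]
        window_total -= carbon_intensity[start - 1]
        if window_total < best_total:
            best_start, best_total = start, window_total

    return best_start


# Hypothetical 12-hour forecast (illustrative values, not real grid data).
forecast = [450, 430, 400, 380, 300, 220, 180, 200, 260, 350, 420, 460]
print(best_start_hour(forecast, job_hours=3))
```

With these made-up numbers, the cleanest three-hour stretch starts at hour 5, so a job that can wait would be delayed until then instead of starting right away.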

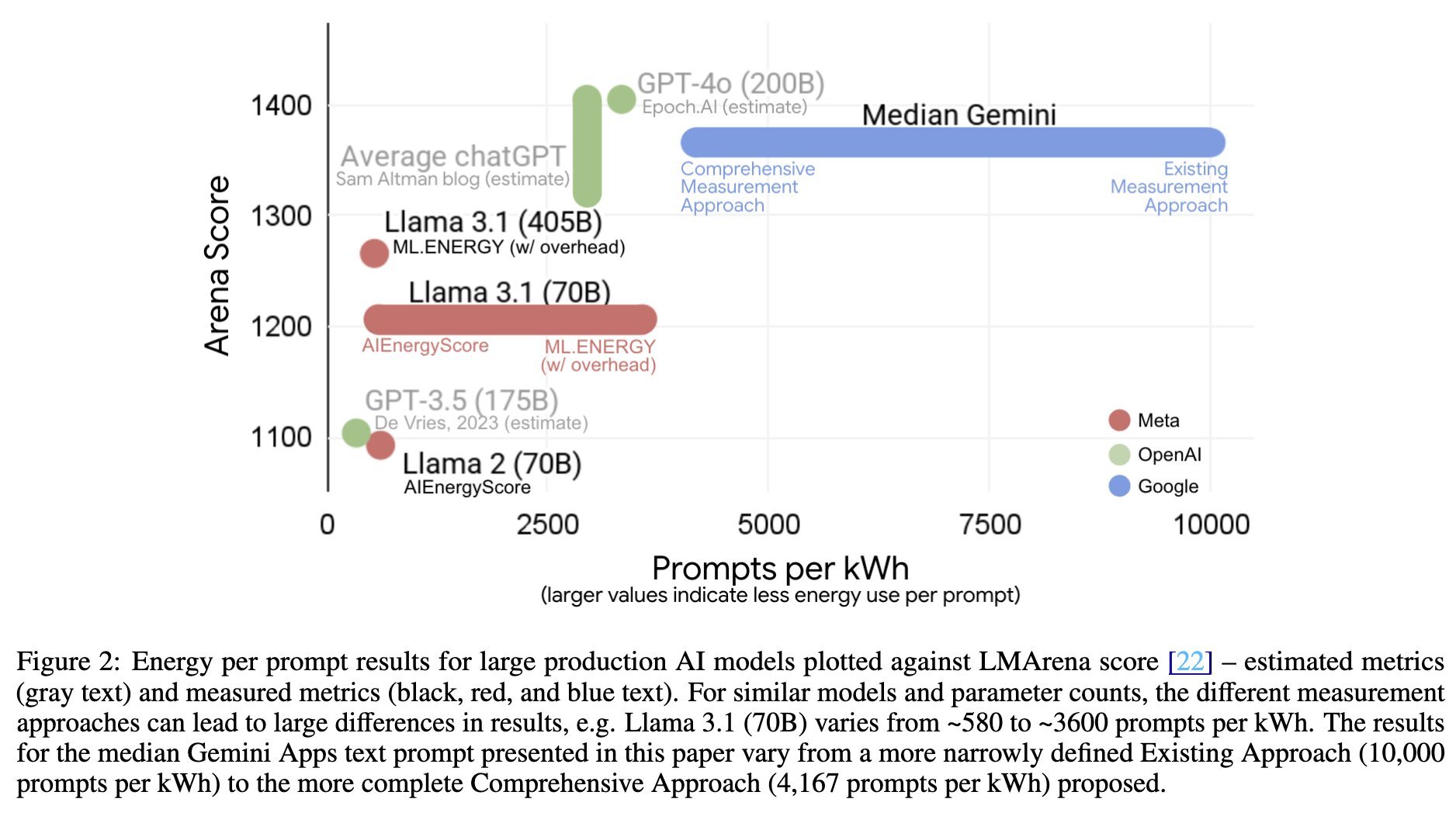

Read the latest from Google's Blog on energy usage of Gemini. Chart below.

Join the Conversation

Is AI's energy use outpacing our ability to make it cleaner—or will design and policy catch up? What should “responsible AI” mean when it comes to power, water, and local communities?

Hit reply and tell me:

- What worries you most (if anything) about the energy side of AI?

- What would you want companies or policymakers to be required to disclose?

Discussion board coming soon — we'll be adding a space for comments and conversation here.